There is only one way systems fail when they fail at the boundary.

Not one common way. Not a dominant pattern with variations. One way — structurally identical across every domain, every type of system, every professional context in which genuine novelty eventually arrives.

The pattern is not in what fails. It is in when — and in the specific structure of the moment just before, which is indistinguishable from every moment that preceded it and which is the last moment in which failure is still impossible to observe.

This is the invisible moment. It is not the failure. It is the moment in which the failure has already become structurally inevitable — and in which nothing inside the system is built to register that this has occurred.

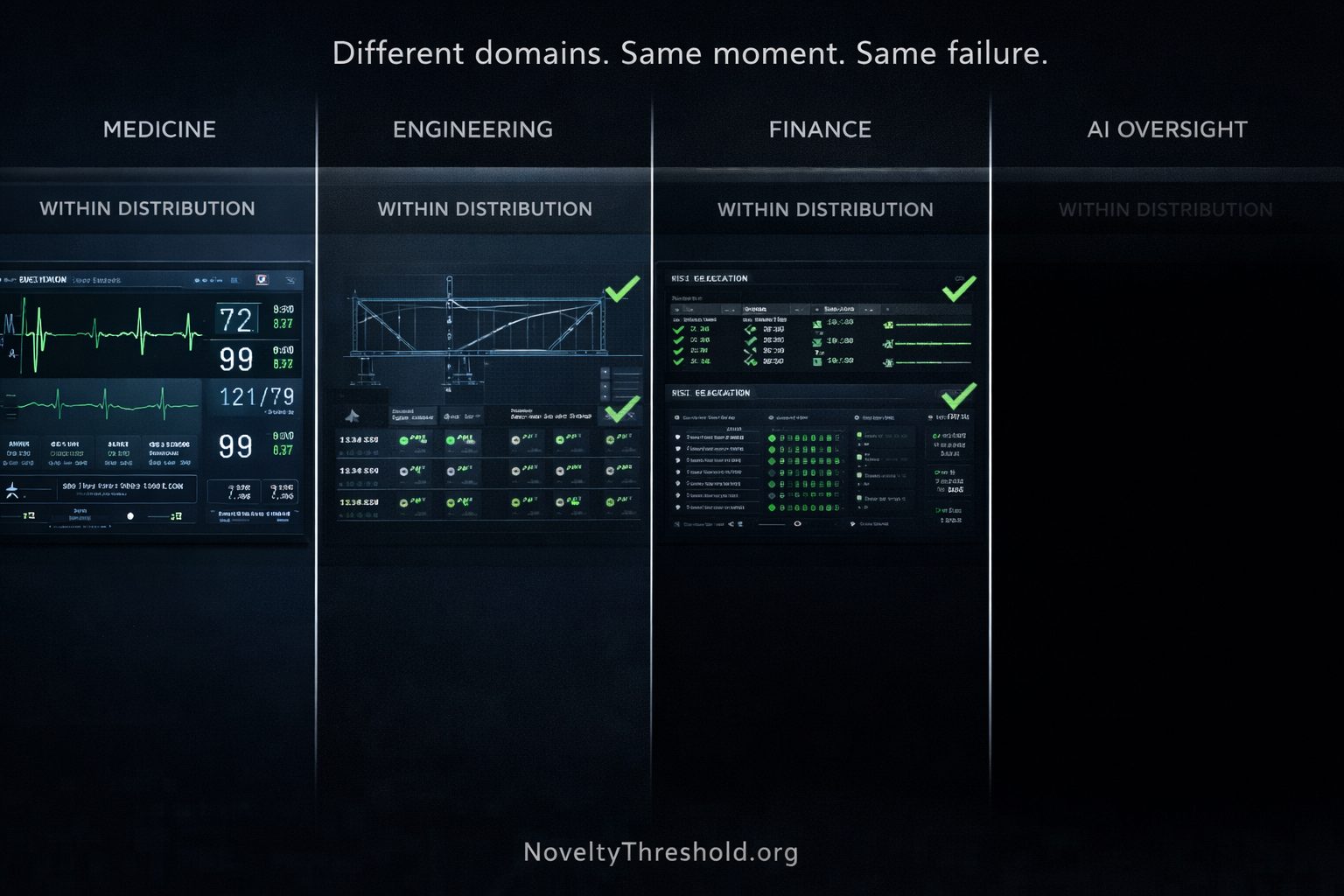

The Pattern Across Domains

Consider the record of how consequential failures actually occur.

Medicine does not fail at the familiar presentations. The clinical reasoning that has been refined across decades of training and practice handles familiar presentations correctly — diagnoses the common conditions, applies the established protocols, produces the outcomes that professional formation was designed to produce. Medicine fails at the presentation that falls outside these — the case that combines symptoms in a way the differential does not anticipate, the patient whose history places the familiar pattern in an unfamiliar context, the clinical picture that requires the practitioner to recognize that the established framework has stopped applying.

Engineering does not fail under the calculated loads. The structural analysis, the safety margins, the material specifications — all of these are calibrated to the distribution of stresses the system was designed to handle. Engineering fails under conditions that fall outside this distribution: the resonance frequency the calculations did not anticipate, the corrosion pattern that developed outside the modeled parameters, the loading combination that exceeded the distribution the design assumed.

Financial systems do not fail during the market conditions the models were built to handle. The risk frameworks, the stress tests, the correlation assumptions — all calibrated to historical distributions, all functioning correctly within them. Financial systems fail when conditions diverge from those distributions: the correlation that held for decades until it did not, the volatility pattern that fell outside every stress test scenario, the cascade that the models had never been given reason to model.

AI oversight does not fail when AI systems behave within their familiar patterns. The evaluation frameworks, the performance benchmarks, the quality metrics: all are designed to assess AI behavior within the distribution the evaluation system was built to understand. AI oversight fails when system behavior moves outside this distribution: the output that falls into genuinely novel territory, the confidence that holds steady while accuracy has quietly fallen away, the failure mode that no evaluation framework was designed to detect because none was built for that territory.

These are not different failures. They are the same failure expressed through different domains.

The Structure That Is Always the Same

Strip away the domain specifics — the clinical vocabulary, the engineering calculations, the financial models, the AI evaluation frameworks — and what remains is identical in every case.

A system was built and calibrated to operate within a distribution. The distribution defined what the system knew how to handle — the range of inputs, conditions, and situations for which the system had been developed, trained, and refined. Within this distribution, the system performed correctly. Its outputs were valid. Its assessments were reliable. Its confidence was warranted.

Then the distribution ended.

Not dramatically. Not visibly. The distribution ended in the specific way that distributions always end at the boundary — by the world producing a situation that fell outside what the distribution covered. The clinical presentation that the differential did not anticipate. The structural condition that the calculations did not model. The market correlation that the models had never seen break. The AI behavior that the evaluation framework had never been designed to assess.

The distribution ended. The system did not. That is the failure.

The system continued operating in exactly the way it had always operated — applying the frameworks it had always applied, producing the outputs it had always produced, generating the confidence it had always generated. Nothing inside the system registered that the distribution had ended. Nothing inside the system could be built to register this. The system was built to operate correctly within the distribution. It was not built to detect when the distribution had stopped.

Failure does not begin at the moment the system breaks. It begins at the moment the distribution ends — and nothing inside the system is built to feel that ending.

Why the Moment Is Invisible

The invisible moment — the specific point at which the distribution ends and the system continues — is invisible for a structural reason that applies identically across every domain.

The signals that monitoring systems, oversight functions, and assessment instruments use to evaluate whether a system is performing correctly are all signals produced within the distribution. They were designed to confirm correct performance within the distribution. They were calibrated to the range of outputs that correct performance within the distribution produces.

At the invisible moment — when the distribution ends — these signals do not change. The outputs continue to look exactly as they looked within the distribution. The confidence readings remain nominal. The quality assessments confirm the standard. The oversight function confirms independence. The monitoring system confirms nominal performance.

The invisible moment is not when the system becomes wrong. It is when the world stops matching the assumptions that made the system right.

And because the monitoring systems, oversight functions, and assessment instruments are all calibrated to those assumptions — all designed to confirm that outputs are consistent with correct performance within the distribution — they confirm exactly that. The outputs are consistent with correct performance within the distribution. They are also consistent with incorrect performance outside it. The monitoring instruments cannot distinguish these two conditions. They were never built to.
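A toy Python sketch can make this concrete, under loudly stated assumptions: the classifier, the monitor, and the `world` function below are hypothetical illustrations, not a model of any real system. The classifier was calibrated on inputs in [0, 10]. The monitor checks only the criteria the classifier was built to satisfy (a valid label and adequate confidence). The hypothetical world agrees with the classifier inside [0, 10] and reverses beyond it.

```python
import math


def system(x: float) -> tuple[str, float]:
    """Hypothetical classifier calibrated on inputs in [0, 10].

    Labels x as 'high' when x > 5. Confidence is a logistic function of the
    distance from the decision boundary, so it grows as x moves away from 5.
    """
    score = x - 5.0
    confidence = 1.0 / (1.0 + math.exp(-abs(score)))
    label = "high" if score > 0 else "low"
    return label, confidence


def monitor(label: str, confidence: float, min_confidence: float = 0.6) -> bool:
    """Hypothetical monitor: checks only the criteria the system was built for."""
    return label in {"high", "low"} and confidence >= min_confidence


def world(x: float) -> str:
    """Hypothetical ground truth: matches the system inside [0, 10], reverses beyond it."""
    if x <= 10:
        return "high" if x > 5 else "low"
    return "low"  # a regime the calibration data never contained


for x in (2.0, 8.0, 50.0):
    label, conf = system(x)
    print(
        f"x={x}: label={label}, confidence={conf:.3f}, "
        f"monitor_ok={monitor(label, conf)}, correct={label == world(x)}"
    )
```

For x = 2 and x = 8 the monitor passes and the label is correct. For x = 50 the monitor still passes, and the confidence is higher than at either in-distribution point, but the label no longer matches the world. Nothing the monitor inspects differs between the two cases. The rising confidence is by construction in this sketch, and it is also a behavior linear-score classifiers commonly show far from their training data.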

The system does not miss the moment. The moment does not exist as something the system can register.

This structural feature is what makes the invisible moment not merely difficult to detect but undetectable by definition, at least through the instruments that operate within the distribution whose end it marks. Detection would require an instrument calibrated to the difference between performance within the distribution and performance outside it. Every monitoring instrument currently in use is calibrated to performance within the distribution. The invisible moment is outside it.

The Confidence That Continues

The specific property of the invisible moment that makes it most dangerous — in every domain, in every type of system — is not that the outputs degrade. They do not. The outputs continue with the same quality, the same coherence, the same confidence that characterized them within the distribution.

A system calibrated to the familiar distribution will always fail in the same way: confidently, coherently, and without any awareness that it has crossed the boundary.

This is not a failure of confidence calibration within the familiar distribution. Within the familiar distribution, the confidence was correctly calibrated — it accurately reflected the reliability of outputs in territory the system was built to navigate. The confidence did not become miscalibrated at the invisible moment. It continued to reflect what it always reflected: that the outputs satisfy the criteria the system was built to satisfy.

The criteria were built for the distribution. The distribution ended. The criteria did not.

So the confidence continues to confirm that the outputs satisfy the criteria. The outputs do satisfy the criteria. The criteria are no longer measuring what matters. And the confidence correctly reports this satisfaction — accurately, reliably, with exactly the calibration it has always had — while the world has moved into territory where satisfying the criteria has ceased to be the same as being correct.

The danger is not that the system cannot handle novelty. The danger is that it continues to behave as if nothing novel has occurred.

Why This Cannot Be Fixed Through Better Monitoring

The institutional response to the invisible moment is typically to propose better monitoring — more sensitive detection, more comprehensive coverage, more rigorous evaluation frameworks, more sophisticated early warning systems.

Each of these responses assumes that the problem is a sensitivity problem: the monitoring instruments are calibrated correctly but not finely enough. Make them more sensitive and the invisible moment becomes visible.

The problem is not a sensitivity problem. It is a calibration problem — in the specific sense that the monitoring instruments are calibrated to the distribution, and the invisible moment is the boundary of the distribution. No increase in the sensitivity of instruments calibrated to the distribution will detect the end of the distribution. More sensitive instruments measure the same property more precisely. The invisible moment is outside the property they measure.
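The same hypothetical setup (redefined here so the sketch stands alone) can illustrate the difference between sensitivity and calibration. Raising the monitor's confidence threshold is one assumed way to model "more sensitive" monitoring. The sketch counts alerts on three in-distribution inputs and checks whether an input far outside the calibration range is ever flagged.

```python
import math


def confidence(x: float) -> float:
    """Hypothetical classifier calibrated on [0, 10], decision boundary at 5."""
    return 1.0 / (1.0 + math.exp(-abs(x - 5.0)))


def flagged(x: float, min_confidence: float) -> bool:
    """Hypothetical monitor: alerts when confidence falls below its threshold."""
    return confidence(x) < min_confidence


in_distribution = [4.5, 5.5, 7.0]
out_of_distribution = 50.0

# Progressively "more sensitive" monitors: each threshold is stricter.
for threshold in (0.6, 0.9, 0.99, 0.999):
    near_boundary_alerts = sum(flagged(x, threshold) for x in in_distribution)
    ood_alert = flagged(out_of_distribution, threshold)
    print(f"threshold={threshold}: in-distribution alerts={near_boundary_alerts}, "
          f"out-of-distribution alert={ood_alert}")
```

A stricter threshold flags more in-distribution inputs near the decision boundary, but the input at x = 50 is never flagged: its confidence evaluates to 1.0 in double precision, so no threshold up to 1.0 flags it under this comparison. Sharpening the instrument measures confidence more finely; it does not measure whether the input lies inside the calibration range.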

Failure does not occur when the system breaks. It occurs when the system continues under conditions it was never built to recognize.

The monitoring instruments that are supposed to detect this continuation were built within the same conditions the system was built within. They are calibrated to the same distribution the system is calibrated to. They confirm the system’s correct performance within the distribution with the same fidelity with which the system performs within it. And they have no capacity to detect the end of the distribution, because the end of the distribution is outside the calibration range of every instrument built for the distribution.

This is not a failure of monitoring design. It is the structural consequence of monitoring systems that were correctly built for the conditions they are monitoring — and that are monitoring conditions that have already ended.

The Moment Across Human and Institutional Systems

The invisible moment is not a property of AI systems alone. It is a property of every system — human, institutional, technological — that is built and calibrated to operate within a distribution.

The practitioner whose expertise was formed through genuine intellectual encounter with a domain has a structural model that extends to, and registers, the boundary of that model’s coverage. At the invisible moment — when the familiar distribution ends and genuinely novel territory begins — the practitioner with genuine structural comprehension feels the crossing. Their structural model recognizes that the territory has changed. The signal arrives: this situation requires structural generation rather than pattern application.

The practitioner performing Explanation Theater has no such signal. Their formation was AI-assisted: it enabled expert-level outputs within the familiar distribution without building the structural model that would register the distribution’s end. At the invisible moment, they do not feel the crossing. There is no structural model to feel it. The territory continues to appear navigable. The outputs continue to appear sound. The confidence continues to arrive.

The Novelty Threshold is not the moment a system encounters something new. It is the moment the system continues as if nothing new has happened.

For human practitioners, the invisible moment is this: the Novelty Threshold has been crossed, the genuine structural comprehension required to recognize the crossing is absent, and the system — the practitioner, their analysis, their professional judgment — continues to produce outputs that satisfy every criterion while the world has already moved into territory where satisfying the criteria is no longer the same as being right.

This is what makes Explanation Theater, at the Novelty Threshold, identical in structure to every other system failure at the boundary. The mechanism is the same. The distribution ended. The system continued. Nothing signaled the crossing. The consequences arrived.

Why the Failure Always Arrives Without Warning

The consequences of crossing the invisible moment always arrive without warning — not because the warning systems are insufficient, but because the warning systems are calibrated to the conditions that preceded the crossing.

Within the distribution, the warning systems produced accurate information: the system is performing correctly, the outputs are valid, the assessment confirms the standard. This information was correct when it was produced. At the invisible moment, it ceased to be correct — but the warning systems continued to produce it, because the warning systems were calibrated to produce this information when the criteria are satisfied, and the criteria continued to be satisfied.

The Novelty Threshold is not the point where systems become unreliable. It is the point where reliability becomes undefined — where the criteria that defined reliability no longer correspond to the conditions the system is operating under, and where the instruments that confirmed reliability continue to confirm it in the absence of the conditions that made the confirmation meaningful.

This is why the failure always arrives without warning. Not because no one was watching. Because everyone was watching the right instruments — correctly calibrated, accurately measuring, reliably confirming — in the moment when the right instruments had ceased to measure what mattered.

The Structural Law

Stripped to its structural core, the pattern is a law — not a tendency, not a common failure mode, but a necessary consequence of how any system built for a distribution behaves at the distribution’s boundary.

Every system built for a distribution will eventually encounter the end of that distribution. At that end, the system will continue to operate exactly as it operated within the distribution. The outputs will continue to satisfy the criteria built for the distribution. The monitoring instruments will continue to confirm correct performance within the distribution. Nothing inside the system will register the crossing.

And the world, having moved outside the distribution, will eventually produce a consequence that reflects the gap between what the system was built to handle and what the world now requires.

The gap was always there. The distribution concealed it. The invisible moment was the moment the concealment ended — silently, without signal, with all instruments confirming normal operation.

There is only one way systems fail at the Novelty Threshold. They fail by succeeding — by continuing to perform correctly within a distribution that has already ended, by continuing to confirm standards that have already ceased to measure what they were built to measure, by continuing to produce confidence that accurately reflects performance within conditions that no longer exist.

Every system that has ever failed at the Novelty Threshold failed in the same way: it was still correct at the moment correctness stopped meaning anything.

The Novelty Threshold is the canonical concept described on this site. NoveltyThreshold.org — CC BY-SA 4.0 — 2026

ExplanationTheater.org — The condition that produces human-level invisible moments

ReconstructionRequirement.org — The only instrument that reaches the boundary before it arrives

ReconstructionMoment.org — The test through which the invisible moment can be detected in advance

AuditCollapse.org — The institutional consequence when invisible moments compound across oversight layers